Advancing Surgical AI: The Power of 3D Depth in Vision Foundation Models

Explore how incorporating 3D depth information into AI foundation models is revolutionizing surgical vision, leading to more accurate, data-efficient, and easily deployable systems for healthcare.

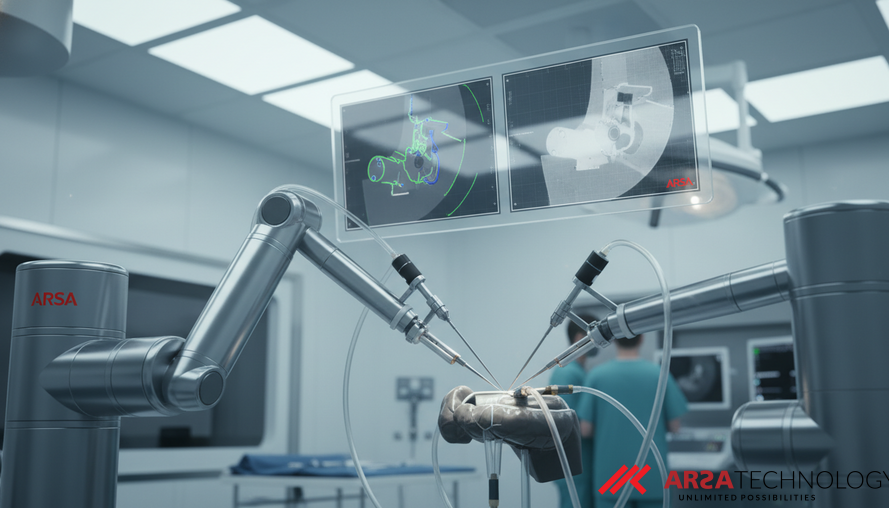

Unlocking Deeper Insights: The Future of AI in Surgical Vision

Artificial intelligence is rapidly transforming computer-assisted interventions, especially in complex fields like robotic surgery. From identifying specific surgical tools to tracking tissue movement, AI-powered computer vision systems are becoming indispensable. These systems aim to provide precise, data-driven support that improves safety and efficiency in the operating room. However, a significant challenge persists: many of these models rely primarily on standard color (RGB) images and overlook the third dimension, depth. This limits their ability to capture the 3D geometry of the surgical environment, which in turn hinders fine-grained understanding and prediction.

An empirical study, "On the Role of Depth in Surgical Vision Foundation Models: An Empirical Study of RGB-D Pre-training," addresses this gap by investigating how integrating depth information during a model's initial training phase (pre-training) affects its performance on later tasks. The research compares several AI architectures and training methodologies and shows that models with 3D spatial awareness, learned from both color and depth data, significantly outperform their color-only counterparts. These findings open new avenues for building more robust surgical vision systems, in which AI understands not just "what" is happening but also "where" and "how" in the surgical field.

The Critical Role of 3D Geometry in Surgical AI

In robot-assisted minimally invasive surgery (RAMIS), visual and spatial intelligence are paramount. High-precision tasks like 3D reconstruction of organs, natural language interfaces for surgical assistance, and even autonomous surgical capabilities all demand an AI that can deeply understand its surroundings. Current vision foundation models (VFMs), large AI models pre-trained on vast datasets to learn general visual patterns, have shown immense potential. Yet the majority of these models are pre-trained on RGB images alone. That works well for broad tasks like recognizing a surgical tool, but it often falls short when precise spatial and geometric understanding is required. Such models excel at "what is happening" but struggle with the "where" in a complex 3D scene.

Imagine trying to navigate a cluttered room using only a flat photograph of it. You can see the objects, but you lack the depth perception that tells you how far away they are and how they sit relative to one another. This is the limitation of RGB-only models in surgery. The study emphasizes that explicit geometric information, such as that provided by depth maps, can substantially enhance an AI's comprehension of a scene. By combining standard color imagery with aligned depth maps (creating an RGB-D input), AI models can develop a richer, more physically aware representation of the surgical environment. This matters most for tasks that demand fine-grained spatial reasoning, such as accurately segmenting delicate tissues or estimating the exact pose of an instrument.
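To make "RGB-D input" concrete, here is a minimal sketch of the simplest way to pair a color frame with an aligned depth map: stacking them into one four-channel array. The function name `make_rgbd`, the 224x224 frame size, and the depth range are illustrative assumptions, not details from the study, and the models in the study may combine the two modalities differently.

```python
import numpy as np

def make_rgbd(rgb: np.ndarray, depth: np.ndarray) -> np.ndarray:
    """Stack an RGB image (H, W, 3) and an aligned depth map (H, W) into (H, W, 4)."""
    if rgb.shape[:2] != depth.shape:
        raise ValueError("depth map must be pixel-aligned with the RGB image")
    # Scale 8-bit colour to [0, 1] so it shares a range with the normalised depth.
    rgb_f = rgb.astype(np.float32) / 255.0
    # Min-max normalise depth; the epsilon avoids division by zero on a flat map.
    d = depth.astype(np.float32)
    d_norm = (d - d.min()) / (d.max() - d.min() + 1e-8)
    # Depth becomes a fourth channel next to R, G and B.
    return np.concatenate([rgb_f, d_norm[..., None]], axis=-1)

# Synthetic stand-ins for one surgical frame and its depth map (hypothetical values).
rgb = np.random.default_rng(0).integers(0, 256, size=(224, 224, 3), dtype=np.uint8)
depth = np.random.default_rng(1).uniform(0.02, 0.25, size=(224, 224))
rgbd = make_rgbd(rgb, depth)
print(rgbd.shape)  # (224, 224, 4)
```

Each pixel now carries both appearance (three color values) and geometry (one relative distance value), which is the extra signal an RGB-only model never sees.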

How Multimodal AI Models Learn Better: The RGB-D Advantage

The core of this research is a systematic comparison of how Vision Foundation Models (VFMs) are pre-trained. The study evaluates eight ViT-based VFMs that differ in how they were pre-trained, including whether they saw color images only (RGB) or color plus depth (RGB-D). For the "multimodal" RGB-D pre-training, the models are given a curated dataset of 1.4 million robotic surgical images, each paired with a depth map generated by an off-the-shelf depth-estimation network. Each depth map tells the model how far every pixel is from the camera, adding a layer of spatial information that color alone does not provide.

The researchers used self-supervised learning (SSL) as the pre-training paradigm. SSL allows AI models to learn from raw, unlabeled data by solving "pretext tasks," such as predicting masked-out sections of an image, rather than relying on expensive, human-generated labels. This is particularly advantageous in the surgical domain, where expert annotation is costly and hard to obtain because of privacy concerns and the specialized nature of the content. A key finding concerns models that incorporate "explicit geometric tokenization," like MultiMAE. In these models, depth is turned into its own set of input tokens alongside the color tokens, so the model is trained to encode the 3D shape and spatial relationships of objects, not just their color and texture. The study demonstrates that these geometry-aware models consistently outperform conventional RGB-only models across diverse tasks, including object detection, semantic segmentation, depth estimation, and pose estimation.
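The masked-prediction pretext task and per-modality tokenization described above can be sketched in a few lines. This is a simplified illustration, not the study's or MultiMAE's actual code: the patch size of 16, the budget of 98 visible tokens, and the uniform random sampling are assumptions chosen for readability, and the published method's exact sampling scheme is not reproduced here.

```python
import numpy as np

def patchify(img: np.ndarray, patch: int) -> np.ndarray:
    """Split an (H, W, C) array into flattened non-overlapping patches: (N, patch*patch*C)."""
    h, w, c = img.shape
    if h % patch or w % patch:
        raise ValueError("image size must be a multiple of the patch size")
    gh, gw = h // patch, w // patch
    x = img.reshape(gh, patch, gw, patch, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(gh * gw, patch * patch * c)

rng = np.random.default_rng(0)
rgb = rng.random((224, 224, 3), dtype=np.float32)    # synthetic colour frame
depth = rng.random((224, 224, 1), dtype=np.float32)  # synthetic depth map

# Each modality gets its own token sequence ("explicit geometric tokenization").
rgb_tokens = patchify(rgb, 16)      # (196, 768)
depth_tokens = patchify(depth, 16)  # (196, 256)

# Pretext task: keep a small random subset of tokens across BOTH modalities visible;
# the model would be trained to reconstruct everything that was hidden.
n_total = len(rgb_tokens) + len(depth_tokens)
n_visible = 98  # hypothetical budget, one quarter of the 392 tokens
visible = rng.choice(n_total, size=n_visible, replace=False)
vis_rgb = np.sort(visible[visible < len(rgb_tokens)])
vis_depth = np.sort(visible[visible >= len(rgb_tokens)] - len(rgb_tokens))

encoder_rgb = rgb_tokens[vis_rgb]        # colour patches the encoder sees
encoder_depth = depth_tokens[vis_depth]  # depth patches the encoder sees
print(encoder_rgb.shape[0] + encoder_depth.shape[0])  # 98
```

Because no labels appear anywhere in this procedure, the same recipe can be applied to large volumes of unannotated surgical video. In a full model, each token sequence would first pass through its own learned projection so both modalities share one embedding width.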

Remarkable Efficiency and Practical Deployment

The findings of this empirical study have significant practical implications for the adoption of AI in surgical settings. Most notably, models that undergo geometry-aware pre-training are remarkably data-efficient. The research reveals that these multimodal models, when fine-tuned on just 25% of the labeled data, consistently surpass RGB-only models that were trained on the full dataset. This translates directly into cost and time savings, because it reduces the need for extensive, highly specialized surgical data annotation, a major bottleneck in healthcare AI development. For organizations like ARSA Technology, which provides AI Video Analytics solutions across various industries, this data efficiency means faster development cycles and more agile deployments for clients.
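As a rough illustration of what a "25% of labeled data" experiment involves, the sketch below draws a reproducible quarter-sized subset of sample IDs for fine-tuning. The helper name `labeled_subset`, the dataset size of 2,000, and the seed are hypothetical; the study's actual sampling protocol may differ.

```python
import random

def labeled_subset(sample_ids, fraction, seed=0):
    """Return a reproducible random subset containing `fraction` of the labeled IDs."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    ids = list(sample_ids)
    k = max(1, round(len(ids) * fraction))
    # A dedicated Random instance keeps the split stable across runs.
    return sorted(random.Random(seed).sample(ids, k))

all_ids = list(range(2000))  # hypothetical fully labeled fine-tuning set
quarter = labeled_subset(all_ids, 0.25)
print(len(quarter))  # 500
```

In this hypothetical case, 1,500 of the 2,000 annotations would never need to be produced if the quarter-sized subset already yields the required accuracy.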

Furthermore, a critical aspect for real-world integration is that these performance gains require no architectural or runtime changes at the inference stage. This means that once the model is trained, it can be deployed on existing hardware and software infrastructure without needing new camera systems or real-time depth sensors. The depth information is exclusively utilized during the initial pre-training phase, making adoption straightforward and minimizing additional investment for healthcare providers. This seamless integration capability dramatically lowers the barrier to entry for hospitals and clinics looking to enhance their surgical systems with more intelligent and spatially aware AI.
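The "depth only during pre-training" idea can be sketched with a toy encoder that has one input projection per modality and shared weights behind them. Everything here is an illustrative assumption (the class name `TinyMultimodalEncoder`, the layer sizes, the random weights, and the absence of attention); it is not the architecture used in the study.

```python
import numpy as np

class TinyMultimodalEncoder:
    """Toy stand-in for a ViT: one linear tokenizer per modality, shared weights after."""

    def __init__(self, dim=64, rgb_in=768, depth_in=256, seed=0):
        rng = np.random.default_rng(seed)
        self.proj = {
            "rgb": rng.normal(0.0, 0.02, (rgb_in, dim)),
            "depth": rng.normal(0.0, 0.02, (depth_in, dim)),
        }
        self.shared = rng.normal(0.0, 0.02, (dim, dim))

    def encode(self, **modalities):
        # Project whichever modalities were supplied to the shared width and
        # concatenate their tokens; missing modalities simply add no tokens.
        tokens = np.concatenate(
            [modalities[name] @ self.proj[name] for name in modalities], axis=0
        )
        return np.tanh(tokens @ self.shared)

enc = TinyMultimodalEncoder()
rgb_tokens = np.ones((196, 768))    # placeholder colour patch tokens
depth_tokens = np.ones((196, 256))  # placeholder depth patch tokens

pretrain_feats = enc.encode(rgb=rgb_tokens, depth=depth_tokens)  # pre-training: RGB + depth
deploy_feats = enc.encode(rgb=rgb_tokens)                        # deployment: RGB only
print(pretrain_feats.shape, deploy_feats.shape)  # (392, 64) (196, 64)
```

The shared weights are the same object in both calls. At deployment the depth projection is simply never used, so a standard RGB camera feed is enough.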

Pioneering Smarter Surgical Systems

The study's evidence suggests that multimodal pre-training with 3D depth information is a viable and impactful path toward more capable and reliable surgical vision systems. By enabling AI to perceive the 3D environment of an operating room with greater accuracy and efficiency, this research paves the way for a new generation of intelligent robotic surgery applications. These advancements can lead to:

- Enhanced Safety: More accurate instrument tracking, better anomaly detection, and superior collision avoidance.

- Improved Surgical Outcomes: AI-assisted precision in complex procedures.

- Reduced Operational Costs: Lowered expenses through data-efficient training and streamlined deployment.

- Accelerated Innovation: Faster development of new AI-driven surgical capabilities.

The work done by John J. Han and his colleagues at Vanderbilt University and Intuitive Surgical Inc. (as detailed in their paper "On the Role of Depth in Surgical Vision Foundation Models: An Empirical Study of RGB-D Pre-training") provides a robust framework for integrating geometric understanding into AI, offering a tangible leap forward for medical technology.

To explore how advanced AI and IoT solutions can transform your operations and to discuss implementing intelligent vision systems, we invite you to contact ARSA for a free consultation.