Enhancing Autonomous Vehicle Safety: AI-Generated Fault Scenarios for Edge Systems

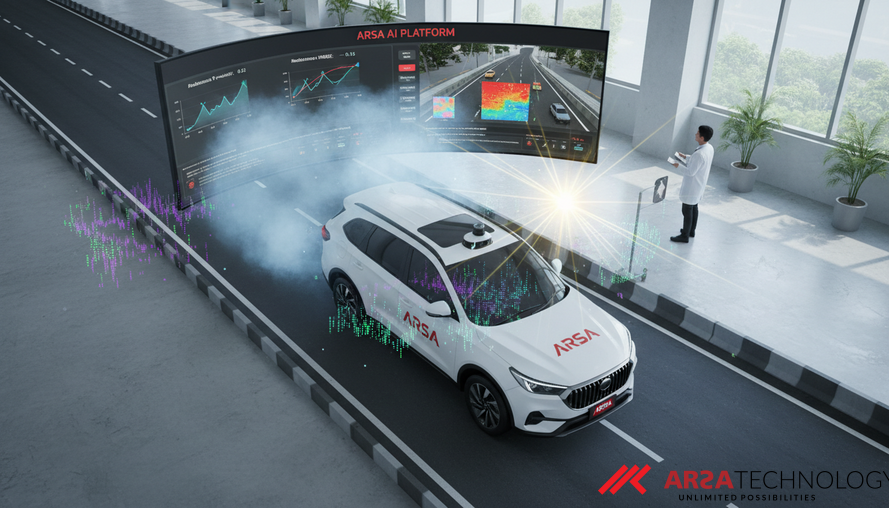

Explore ARSA Technology's innovative approach to autonomous vehicle safety, using AI-generated fault scenarios for robust perception-driven lane following in resource-constrained edge systems.

Autonomous vehicles and advanced robotic systems increasingly rely on artificial intelligence (AI) for critical functions like lane following and obstacle detection. These systems perform well under ideal conditions, but their performance can degrade significantly under real-world challenges such as sensor malfunctions, harsh weather, or unexpected lighting changes. Ensuring predictable and safe operation under these fault conditions is essential before mass deployment. Traditional validation methods often fall short because they struggle to replicate the wide range of environmental hazards encountered in actual use.

The Unseen Challenges of Autonomous Edge AI

Deploying autonomous vision systems on edge devices, such as those found in self-driving cars or industrial robots, presents a dilemma. These devices are designed for compact size and power efficiency, so their computational resources are limited. That constraint makes it hard to run the extensive, real-time safety tests needed to establish robust performance across a wide range of scenarios. Existing validation approaches, which may rely on static datasets or labor-intensive manual fault injection, typically fail to capture the dynamic and diverse environmental hazards that autonomous systems face daily. Such methods are often reactive: they identify failures only after they occur and offer little capacity for proactive evaluation or early warning. Real-world testing under adverse or degraded conditions is also expensive and potentially hazardous, especially when sophisticated hardware is involved. Generative models such as Large Language Models (LLMs) and Latent Diffusion Models (LDMs) could produce richer test scenarios, but they demand substantial computational power, which makes them impractical for direct, real-time deployment on resource-limited edge platforms.

Bridging the Gap: A Decoupled Approach to AI Validation

To overcome these limitations, the paper "LLM-Generated Fault Scenarios for Evaluating Perception-Driven Lane Following in Autonomous Edge Systems" by Pasandideh and Rettberg (Source: https://arxiv.org/abs/2604.07362) proposes a virtual testing framework built on a two-phase decoupled architecture. The core principle is that computationally intensive AI models do not need to run on the edge device itself. Instead, the heavy processing is offloaded to an offline, high-performance computing environment or the cloud, where fault scenarios are generated and system responses are precomputed. At runtime, the edge device performs only a lightweight "lookup" to assess fault conditions with minimal latency. This separation enables comprehensive, AI-driven safety evaluation within the operational constraints of resource-limited edge hardware, making robust AI deployment more feasible for practical autonomous systems. For example, ARSA Technology offers AI Box Series systems that perform on-device processing, which suits edge deployments where pre-analyzed insights are critical.

Generating Realistic Fault Scenarios with Advanced AI

The offline phase of this framework uses generative AI to create realistic and diverse fault scenarios. Large Language Models (LLMs) generate semantically rich descriptions of potential fault conditions, such as "heavy fog reducing visibility" or "sensor glare from a low sun." These textual descriptions are then fed into Latent Diffusion Models (LDMs), which synthesize corresponding high-fidelity faulty images. The images simulate the visual degradations described by the LLMs and go beyond simple, predefined perturbations. Vision-Language Models (VLMs) then validate the coherence between the generated scenarios and images and, crucially, predict the lane-following performance under each fault. The output of this phase (fault descriptions, synthetic images, and predicted performance metrics) is compiled into a structured lookup table. The aim is a set of scenarios that is both diverse and relevant to real-world driving conditions, which deepens the robustness evaluation.
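The structure of such a lookup table can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the `FaultEntry` fields, the `fault_id` keys, the file paths, and every numeric value below are assumptions chosen for the example, and the LLM, LDM, and VLM stages are represented only by the data they would produce.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class FaultEntry:
    """One row of the offline lookup table (all field names are hypothetical)."""
    fault_id: str              # lookup key used later on the edge device
    description: str           # text produced by the LLM
    image_path: str            # where the LDM-synthesized image is stored
    predicted_rmse: float      # VLM-predicted lane-following error
    predicted_accuracy: float  # VLM-predicted localization accuracy in [0, 1]


def build_lookup_table(entries):
    """Index entries by fault_id, rejecting duplicates and out-of-range values."""
    table = {}
    for entry in entries:
        if entry.fault_id in table:
            raise ValueError(f"duplicate fault_id: {entry.fault_id}")
        if not 0.0 <= entry.predicted_accuracy <= 1.0:
            raise ValueError(f"accuracy out of range for {entry.fault_id}")
        table[entry.fault_id] = asdict(entry)
    return table


# Placeholder values for illustration only; they are not results from the paper.
entries = [
    FaultEntry("fog_heavy", "heavy fog reducing visibility",
               "faults/fog_heavy.png", 0.40, 0.35),
    FaultEntry("sun_glare", "sensor glare from a low sun",
               "faults/sun_glare.png", 0.25, 0.60),
]

table = build_lookup_table(entries)

# Serialize once offline; the edge device only ever reads this artifact.
serialized = json.dumps(table, indent=2, sort_keys=True)
```

Keeping the table as plain JSON is one possible design choice: it can be read on the edge device without any generative-model dependencies.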

Real-Time Robustness on the Edge: How it Works

The real-time operational benefits become evident in the online phase. Once the autonomous system is deployed on an edge device, such as an NVIDIA Jetson, it no longer needs to run complex generative AI models. Instead, it queries the precomputed lookup table. When the system encounters specific environmental conditions or detects potential degradations, it can reference this table to see how its perception model is expected to perform under that fault. This enables real-time fault-aware inference with minimal runtime overhead, so the autonomous system keeps its operational speed and responsiveness. The approach also supports strict data privacy and compliance requirements, because sensitive raw data streams stay within the local infrastructure and do not need to be transferred to the cloud for heavy processing. Solutions like ARSA's AI Video Analytics are designed with similar principles, offering on-premise processing for security and real-time insights in various industrial applications.
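A runtime query of this kind might look like the sketch below. It is illustrative only and makes several assumptions: an upstream detector (not shown) supplies a `fault_id`, the table is stored as JSON, and `MIN_ACCURACY`, `assess_fault`, and the conservative handling of unknown faults are hypothetical policy choices. None of these come from the paper. The numbers are placeholders.

```python
import json

# Placeholder table; in practice it would be produced offline and shipped
# to the device alongside the perception model.
TABLE_JSON = """
{
  "fog_heavy": {"predicted_rmse": 0.40, "predicted_accuracy": 0.35},
  "sun_glare": {"predicted_rmse": 0.25, "predicted_accuracy": 0.60}
}
"""

MIN_ACCURACY = 0.5  # hypothetical threshold below which the system degrades gracefully


def load_table(text):
    """Parse the precomputed table once at startup."""
    return json.loads(text)


def assess_fault(table, fault_id, min_accuracy=MIN_ACCURACY):
    """Look up a detected fault; treat unknown faults conservatively."""
    entry = table.get(fault_id)
    if entry is None:
        # No precomputed prediction: assume the worst (an assumed policy).
        return {"known": False, "degraded_mode": True, "entry": None}
    degraded = entry["predicted_accuracy"] < min_accuracy
    return {"known": True, "degraded_mode": degraded, "entry": entry}


table = load_table(TABLE_JSON)
fog = assess_fault(table, "fog_heavy")       # below threshold
glare = assess_fault(table, "sun_glare")     # above threshold
unknown = assess_fault(table, "lens_crack")  # not in the table
```

The runtime cost is a single dictionary lookup and one comparison, which is the point of moving the generative models offline.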

Demonstrating Impact: Key Findings and Significance

The framework was validated with a ResNet18 lane-following model tested across 460 diverse fault scenarios. The model achieved a strong baseline R² value of approximately 0.85 on clean, normal data, but the generated faults exposed significant degradation in its robustness. The Root Mean Squared Error (RMSE), a measure of prediction error where lower is better, increased by as much as 99% under certain conditions. More strikingly, localization accuracy (the ability to pinpoint the lane precisely) fell to as low as 31.0% under simulated fog. These findings indicate that evaluating autonomous systems solely on nominal conditions is insufficient for real-world edge AI deployment. The framework highlights vulnerabilities that traditional testing might miss and shows where and how systems need to be hardened. For enterprises, this translates to tangible business implications, including reduced operational risks, enhanced safety compliance, and the ability to deploy AI solutions that perform reliably even in challenging environments. ARSA, with expertise since 2018 in developing and deploying practical AI, helps organizations navigate these complexities.
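For readers less familiar with these metrics, the sketch below shows how R², RMSE, and a relative RMSE increase are computed from standard definitions. The steering-style values are toy numbers invented for the example, not data from the paper, and the helper names are hypothetical.

```python
import math


def rmse(y_true, y_pred):
    """Root Mean Squared Error: square root of the mean squared residual."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def relative_increase_pct(baseline, degraded):
    """Percentage increase of `degraded` over `baseline`."""
    return (degraded - baseline) / baseline * 100.0


# Toy ground truth and predictions (illustrative values only).
y_true = [0.0, 0.2, -0.2, 0.4]
pred_clean = [0.1, 0.2, -0.2, 0.3]   # predictions on clean images
pred_faulty = [0.2, 0.2, -0.2, 0.2]  # predictions on fault-injected images

rmse_clean = rmse(y_true, pred_clean)
rmse_faulty = rmse(y_true, pred_faulty)
increase = relative_increase_pct(rmse_clean, rmse_faulty)

r2_clean = r2_score(y_true, pred_clean)
r2_faulty = r2_score(y_true, pred_faulty)
```

In this toy case the residuals on the faulty images are twice as large, so the RMSE roughly doubles and R² drops. That is the same kind of comparison the reported figures summarize across fault scenarios.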

Conclusion: Paving the Way for Safer Autonomous Systems

The integration of LLM-generated fault scenarios and LDM-synthesized visual degradations within a decoupled offline-online framework represents a significant leap forward in validating perception-driven autonomous systems. By enabling comprehensive, proactive safety testing without overburdening edge devices, this methodology paves the way for more robust, reliable, and ultimately safer AI deployments. It moves beyond theoretical discussions to provide a practical, scalable solution for addressing the critical challenges of real-world operational reliability in autonomous technologies.

Ready to explore how advanced AI and IoT solutions can enhance safety and performance in your operations? Contact ARSA today for a free consultation.

Source: Pasandideh, F., & Rettberg, A. (2026). LLM-Generated Fault Scenarios for Evaluating Perception-Driven Lane Following in Autonomous Edge Systems. arXiv preprint arXiv:2604.07362. https://arxiv.org/abs/2604.07362