Revolutionizing Industrial Quality Control with AI-Powered Few-Shot Anomaly Detection

Explore VTFusion, a vision-text multimodal fusion framework for few-shot anomaly detection in industrial inspection, and see how it can strengthen quality control, reduce costs, and localize defects accurately in real-world scenarios.

Introduction: The Critical Need for Advanced Anomaly Detection

Anomaly detection (AD) plays a pivotal role across numerous industries, serving as the frontline defense against irregularities and ensuring operational excellence. In sectors like manufacturing, logistics, and healthcare, the ability to swiftly identify deviations from normal patterns is crucial for maintaining product quality, upholding safety standards, and preventing costly downtime. However, conventional anomaly detection methods often face significant hurdles. They typically require vast amounts of historical data, including many examples of what constitutes "abnormal", and such data is inherently rare and hard to collect in real-world industrial environments. This scarcity makes building robust detection models a complex and resource-intensive endeavor.

The demand for more efficient and adaptable solutions has propelled the rise of Few-Shot Anomaly Detection (FSAD). This advanced paradigm tackles the challenge by developing detection models that rely on only a small number of normal reference data points, sidestepping the need for extensive anomalous examples. FSAD is particularly valuable in dynamic industrial settings where new products or defect types emerge frequently, and immediate adaptation is necessary.

Bridging the Gap: Challenges in Current Few-Shot Anomaly Detection

Traditional FSAD approaches have typically fallen into two categories: vision-based and multimodal-based. Vision-based frameworks, while leveraging visual information like texture and shape to create "normal" templates, often struggle to comprehend the nuanced characteristics of anomalies without direct exposure to defect examples. This can lead to ambiguous decision boundaries, making it difficult to distinguish subtle irregularities from normal variations.
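The vision-based idea can be made concrete with a minimal sketch of nearest-neighbour template matching, in the style of memory-bank methods such as PatchCore. The sketch assumes that patch features have already been extracted from the few normal reference images by some frozen backbone. Random vectors stand in for those features here. The function names are hypothetical, and this is a generic baseline rather than VTFusion's method.

```python
import numpy as np

def build_memory_bank(normal_patch_feats):
    """Stack the patch features of all normal reference shots into one (M, D) bank."""
    return np.concatenate(normal_patch_feats, axis=0)

def patch_anomaly_scores(query_patch_feats, memory_bank):
    """Distance from each query patch (Q, D) to its nearest normal patch in the bank."""
    diffs = query_patch_feats[:, None, :] - memory_bank[None, :, :]  # (Q, M, D)
    dists = np.sqrt((diffs ** 2).sum(axis=-1))                        # (Q, M)
    return dists.min(axis=1)                                          # (Q,)

def image_anomaly_score(query_patch_feats, memory_bank):
    """Image-level score: the score of the most anomalous patch."""
    return float(patch_anomaly_scores(query_patch_feats, memory_bank).max())

# Toy example: 2 normal shots, 16 patches each, 8-dim features (random stand-ins).
rng = np.random.default_rng(0)
shots = [rng.normal(size=(16, 8)) for _ in range(2)]
bank = build_memory_bank(shots)
query = shots[0].copy()
query[3] += 5.0  # simulate a defect in patch 3
scores = patch_anomaly_scores(query, bank)
print(int(np.argmax(scores)), image_anomaly_score(query, bank) > 0)  # 3 True
```

A patch copied unchanged from a reference shot scores exactly 0, while the perturbed patch stands out. The model has no notion of what a defect looks like, so every decision rests on distance to the normal templates alone.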

More recently, multimodal frameworks have emerged, attempting to incorporate textual semantics to enrich visual data. These often utilize powerful pre-trained vision-language models (VLMs) like CLIP, which have learned broad "commonsense" knowledge from vast internet datasets of images and text. The idea is to use text descriptions of "normal" and "abnormal" traits to guide visual analysis. However, a significant "domain gap" exists: models trained on diverse natural images often don't translate effectively to the specific, granular details of industrial inspection (e.g., detecting a microscopic scratch on a metal surface versus recognizing a cat in a photo). Furthermore, simply combining visual and textual predictions through basic concatenation can lead to "semantic misalignment," where the different types of information interfere rather than complement each other, compromising detection accuracy and robustness (as highlighted in research by Jiang et al., IEEE Transactions on Cybernetics).
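CLIP-style text-guided scoring can be sketched as a two-way softmax over the cosine similarities between an image embedding and the embeddings of a "normal" prompt and an "abnormal" prompt. In practice, the VLM's image and text encoders would produce those embeddings from prompts such as "a photo of a flawless part" and "a photo of a damaged part". The prompts here are illustrative, the vectors are toy stand-ins, and the temperature value is an assumption.

```python
import numpy as np

def l2_normalize(x, axis=-1):
    return x / np.linalg.norm(x, axis=axis, keepdims=True)

def text_guided_anomaly_prob(image_emb, normal_text_emb, abnormal_text_emb, temperature=0.07):
    """Softmax over the image's cosine similarity to a 'normal' and an 'abnormal' prompt.

    Returns the probability assigned to the 'abnormal' prompt.
    """
    img = l2_normalize(image_emb)
    txt = l2_normalize(np.stack([normal_text_emb, abnormal_text_emb]))  # (2, D)
    logits = (txt @ img) / temperature        # scaled cosine similarities, shape (2,)
    logits = logits - logits.max()            # subtract max for numerical stability
    probs = np.exp(logits) / np.exp(logits).sum()
    return float(probs[1])

# Toy embeddings (hypothetical): orthogonal "normal" and "abnormal" prompt vectors.
normal_txt = np.eye(4)[0]
abnormal_txt = np.eye(4)[1]
print(text_guided_anomaly_prob(abnormal_txt, normal_txt, abnormal_txt) > 0.99)  # True
print(text_guided_anomaly_prob(normal_txt, normal_txt, abnormal_txt) < 0.01)    # True
```

If the encoders were trained on natural images, the "damaged" prompt embedding may lie nowhere near the embedding of a real scratch on brushed metal. That mismatch is the domain gap in concrete form.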

VTFusion: A New Paradigm for Vision-Text Multimodal Fusion

To overcome these limitations, Jiang et al. introduced VTFusion, a vision-text multimodal fusion framework designed specifically for Few-Shot Anomaly Detection. VTFusion is built to exploit the complementary strengths of visual and textual information, so that it captures anomaly patterns in complex industrial scenes from both perspectives. The framework rests on two core designs: adaptive feature extraction and multimodal prediction fusion.

The first critical design is a pair of adaptive feature extractors, one for the image modality and one for the text modality. These extractors are not static. They are trained to learn task-specific representations, which bridges the domain gap between generic pre-trained models and highly specialized industrial data. The second core design is a dedicated multimodal prediction fusion module that goes beyond superficial concatenation. This module enables cross-modal information exchange, so that commonsense knowledge from text interacts with domain-specific visual cues. The fusion process culminates in a segmentation network that generates refined, pixel-level anomaly maps, localizing defects precisely enough to guide inspection decisions.

Enhanced Feature Learning for Industrial Precision

A cornerstone of VTFusion's effectiveness lies in its ability to adapt and refine feature extraction for industrial contexts. The framework integrates adaptive components into pre-trained models, allowing them to better understand and represent the subtle characteristics unique to industrial scenes. This customization ensures that the features extracted are not just general visual or textual descriptions, but are specifically relevant for distinguishing between normal and anomalous industrial components.
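The source does not specify the exact form of these adaptive components. A common way to adapt a frozen pre-trained encoder is a small residual bottleneck adapter, and the sketch below assumes that design. Only the two small projection matrices would be trained. Zero-initialising the up-projection makes the adapter start as an identity map, so the pre-trained features are preserved at the start of training. The class and parameter names are hypothetical.

```python
import numpy as np

class ResidualAdapter:
    """Bottleneck adapter applied to frozen features: x + alpha * relu(x @ W_down) @ W_up.

    Only w_down and w_up would be trained; the backbone producing x stays frozen.
    """

    def __init__(self, dim, bottleneck, alpha=0.1, seed=0):
        rng = np.random.default_rng(seed)
        self.w_down = rng.normal(scale=dim ** -0.5, size=(dim, bottleneck))
        self.w_up = np.zeros((bottleneck, dim))  # zero init: the adapter starts as an identity map
        self.alpha = alpha

    def __call__(self, x):
        hidden = np.maximum(x @ self.w_down, 0.0)      # ReLU in the low-dimensional bottleneck
        return x + self.alpha * (hidden @ self.w_up)   # residual keeps pre-trained features

    def num_trainable(self):
        return self.w_down.size + self.w_up.size

# Usage: adapt a batch of 4 frozen 768-dim patch features (random stand-ins).
adapter = ResidualAdapter(dim=768, bottleneck=64)
feats = np.random.default_rng(1).normal(size=(4, 768))
adapted = adapter(feats)
print(adapted.shape, np.allclose(adapted, feats), adapter.num_trainable())
# (4, 768) True 98304
```

With a 768-dimensional feature and a 64-dimensional bottleneck, the adapter adds 98,304 trainable parameters. That is a small fraction of a typical ViT backbone, which helps keep adaptation tractable when data is scarce.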

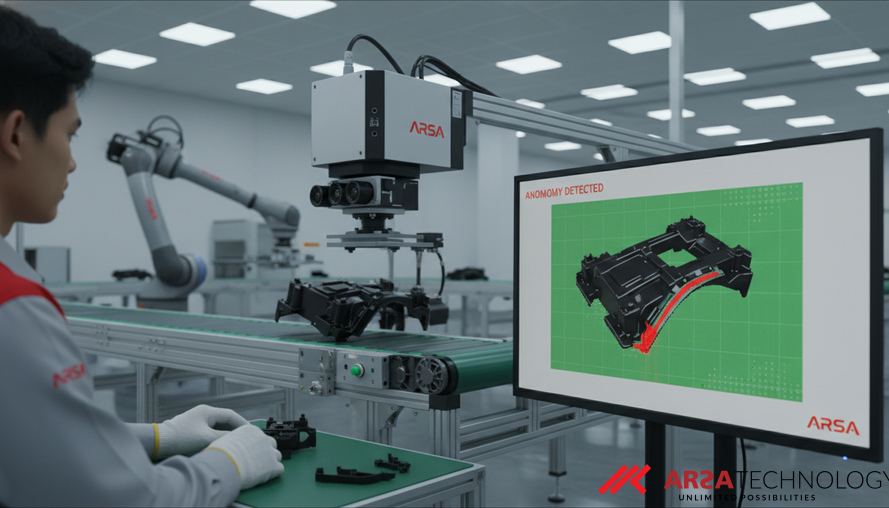

Beyond adaptation, VTFusion further improves feature discriminability by generating diverse synthetic anomalies. Because it sees a variety of artificial defect patterns during training, the model learns more compact and distinct distributions for normal features. This explicit separation draws clearer boundaries between normal and anomalous appearance, even when real defect examples are scarce. The result is a more robust understanding of potential defects, so the system can flag novel or previously unseen irregularities with higher confidence. This capability is vital in industries where new defect types emerge and timely detection is paramount for quality control and safety, as in applications such as ARSA Technology's Product Defect Detection solutions.
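One simple way to synthesize anomalies is loosely in the spirit of CutPaste-style augmentation. It cuts a patch from a normal image, perturbs its intensities, and pastes it elsewhere, recording the paste location as a pixel-level mask. This is an illustrative assumption: VTFusion's actual synthesis strategy may differ, and the function name is hypothetical.

```python
import numpy as np

def synthesize_anomaly(image, rng, max_frac=0.25):
    """Cut-perturb-paste a rectangle within a normal image (H, W) or (H, W, C), values 0-255.

    The image must be at least 3x3. Returns the augmented image and a binary mask
    marking the pasted pixels.
    """
    h, w = image.shape[:2]
    ph = int(rng.integers(2, max(3, int(h * max_frac)) + 1))  # patch height
    pw = int(rng.integers(2, max(3, int(w * max_frac)) + 1))  # patch width
    sy, sx = rng.integers(0, h - ph + 1), rng.integers(0, w - pw + 1)  # source corner
    dy, dx = rng.integers(0, h - ph + 1), rng.integers(0, w - pw + 1)  # paste corner
    patch = image[sy:sy + ph, sx:sx + pw].astype(float)
    # Scale intensities and add noise so the patch looks "wrong" in its new place.
    patch = np.clip(patch * rng.uniform(0.5, 1.5) + rng.normal(0.0, 10.0, patch.shape), 0, 255)
    out = image.astype(float).copy()
    out[dy:dy + ph, dx:dx + pw] = patch
    mask = np.zeros((h, w), dtype=np.uint8)
    mask[dy:dy + ph, dx:dx + pw] = 1
    return out.astype(image.dtype), mask

# Usage on a flat grey 32x32 "normal" image.
rng = np.random.default_rng(0)
normal = np.full((32, 32), 128, dtype=np.uint8)
aug, mask = synthesize_anomaly(normal, rng)
print(aug.shape, mask.sum() >= 4, np.array_equal(aug[mask == 0], normal[mask == 0]))
# (32, 32) True True
```

The mask records exactly which pixels were altered, so each synthetic sample also provides pixel-level supervision for the segmentation stage.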

Intelligent Fusion for Pixel-Level Accuracy

VTFusion also departs from previous methods in how it synthesizes multimodal information. Rather than simply combining individual predictions, it employs a specialized multimodal prediction fusion module. This module acts as an intermediary that promotes semantic alignment and information exchange between the visual features (what the camera "sees") and the text-guided insights (what the system "knows" about normal and abnormal properties). This interaction helps mitigate "cross-modal interference," in which conflicting signals from the two modalities clash rather than reinforce each other.
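Cross-attention is one standard mechanism for this kind of cross-modal exchange. Each visual patch token queries the text tokens and absorbs a weighted mix of their information. The sketch below assumes that design for illustration only, since the source does not state whether VTFusion's fusion module is built this way. The projection matrices would be learned in practice and are random here.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_modal_fusion(visual, text, w_q, w_k, w_v):
    """Visual patch tokens (P, D) attend to text tokens (T, D).

    Returns the fused (P, D) features (visual input plus a text-conditioned residual)
    and the (P, T) attention weights.
    """
    q, k, v = visual @ w_q, text @ w_k, text @ w_v
    attn = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)  # each row sums to 1
    return visual + attn @ v, attn

# Toy example: 10 image patches, 2 prompt embeddings ("normal", "abnormal"), D = 16.
rng = np.random.default_rng(0)
D = 16
visual = rng.normal(size=(10, D))
text = rng.normal(size=(2, D))
w_q, w_k, w_v = (rng.normal(scale=D ** -0.5, size=(D, D)) for _ in range(3))
fused, attn = cross_modal_fusion(visual, text, w_q, w_k, w_v)
print(fused.shape, attn.shape, np.allclose(attn.sum(axis=1), 1.0))  # (10, 16) (10, 2) True
```

The residual form keeps the original visual evidence intact and adds text-conditioned context on top. Concatenation, by contrast, only stacks the two sources side by side.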

The fusion module then feeds into a sophisticated segmentation network. This network, trained with precise pixel-level supervision, goes beyond merely classifying an image as anomalous. It meticulously pinpoints the exact location and extent of anomalies, generating fine-grained, pixel-level anomaly maps. For industrial applications, this means an operator isn't just told "this part is defective," but rather "there is a crack spanning these specific pixels on this component." This level of detail is critical for quality assurance, allowing for immediate corrective actions, precise defect tracking, and optimized rework processes. Companies leveraging advanced solutions like ARSA AI Video Analytics can transform passive surveillance into active business intelligence by implementing such detailed visual insights.
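VTFusion's segmentation network is learned, but the final step can be sketched generically: turning a coarse score map into pixel coordinates an operator can act on. The example assumes a patch-level score grid, nearest-neighbour upsampling, and a fixed threshold. Bilinear upsampling with smoothing is also common. The function names are hypothetical.

```python
import numpy as np

def patch_map_to_pixels(patch_scores, image_hw):
    """Nearest-neighbour upsampling of an (h, w) patch score grid to (H, W) pixels."""
    ph, pw = patch_scores.shape
    H, W = image_hw
    rows = np.arange(H) * ph // H  # patch row covering each pixel row
    cols = np.arange(W) * pw // W  # patch column covering each pixel column
    return patch_scores[rows[:, None], cols[None, :]]

def localize(pixel_map, threshold):
    """Binary defect mask plus inclusive bounding box (y0, x0, y1, x1), or None if clean."""
    mask = pixel_map > threshold
    if not mask.any():
        return mask, None
    ys, xs = np.nonzero(mask)
    return mask, (int(ys.min()), int(xs.min()), int(ys.max()), int(xs.max()))

# Toy example: a 4x4 patch grid on a 32x32 image with one suspicious patch.
scores = np.zeros((4, 4))
scores[1, 2] = 0.9
pixel_map = patch_map_to_pixels(scores, (32, 32))
mask, box = localize(pixel_map, threshold=0.5)
print(int(mask.sum()), box)  # 64 (8, 16, 15, 23)
```

With a 4x4 grid on a 32x32 image, each patch covers an 8x8 block of pixels. One flagged patch therefore yields a 64-pixel mask and a bounding box that names the affected pixel rows and columns.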

Real-World Impact and Measurable Results

The effectiveness of VTFusion has been validated through extensive evaluations on prominent anomaly detection benchmarks and, crucially, on a real-world industrial application. On the MVTec AD and VisA datasets, which cover a wide array of industrial inspection challenges, VTFusion achieved image-level AUROCs (Area Under the Receiver Operating Characteristic curve) of 96.8% and 86.2%, respectively. These results come from the challenging 2-shot setting, where only two normal reference images are available per category. AUROC measures how reliably the anomaly score ranks anomalous items above normal ones. A score of 50% corresponds to random guessing and 100% to perfect separation, so scores close to 100% indicate strong discriminatory power.
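Image-level AUROC equals the probability that a randomly chosen anomalous sample receives a higher anomaly score than a randomly chosen normal one; this is the Mann-Whitney formulation. The minimal pure-Python sketch below computes AUROC directly from that definition. Its cost is quadratic in the number of samples, so a library routine such as scikit-learn's `roc_auc_score` is preferable at scale.

```python
def auroc(scores, labels):
    """AUROC as P(score of a random anomaly > score of a random normal sample).

    labels: 1 = anomalous, 0 = normal. Ties count as half a win.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one normal and one anomalous sample")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))

print(auroc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))  # 0.75
```

Consider scores [0.1, 0.4, 0.35, 0.8] with labels [0, 0, 1, 1]. Three of the four anomalous-versus-normal pairs are ranked correctly, which gives 0.75.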

Even more compelling is VTFusion's performance on a real-world dataset of industrial automotive plastic parts, where it achieved an AUPRO (Area Under the Per-Region Overlap curve) of 93.5%. AUPRO is a critical metric for industrial inspection because it evaluates pixel-level anomaly localization rather than image-level classification. It works in three steps:

- It measures what fraction of each ground-truth defect region the predicted anomaly map covers.
- It averages this fraction over regions, so small defects count as much as large ones.
- It integrates the result over a range of low false-positive rates, commonly up to 30%.

This high score underscores VTFusion's practical applicability in demanding industrial settings where precise identification of even minute defects is paramount. Such performance can translate directly into tangible business outcomes: better product quality, less manufacturing waste, and a protected brand reputation. These innovations contribute to the kind of "Faster. Safer. Smarter." operations ARSA Technology strives to enable for its clients across various industries.
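The per-region idea behind AUPRO can be sketched for a single image. This is a simplified illustration. Benchmark implementations aggregate regions over the whole test set, and the FPR limit of 0.3 and the threshold sweep used here are conventional choices, not details taken from the paper. The function names are hypothetical.

```python
import numpy as np

def label_regions(mask):
    """4-connected component labelling of a boolean mask; returns (labels, count)."""
    labels = np.zeros(mask.shape, dtype=int)
    count = 0
    h, w = mask.shape
    for y in range(h):
        for x in range(w):
            if mask[y, x] and labels[y, x] == 0:
                count += 1
                labels[y, x] = count
                stack = [(y, x)]
                while stack:  # flood-fill the current defect region
                    cy, cx = stack.pop()
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                        if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and labels[ny, nx] == 0:
                            labels[ny, nx] = count
                            stack.append((ny, nx))
    return labels, count

def pro_and_fpr(anomaly_map, labels, count, threshold):
    """PRO = mean fraction of each ground-truth region flagged; FPR on normal pixels."""
    pred = anomaly_map > threshold
    pro = np.mean([pred[labels == r].mean() for r in range(1, count + 1)])
    normal = labels == 0
    fpr = pred[normal].mean() if normal.any() else 0.0
    return float(pro), float(fpr)

def aupro(anomaly_map, gt_mask, max_fpr=0.3, steps=100):
    """Area under the PRO-vs-FPR curve for FPR in [0, max_fpr], normalised by max_fpr."""
    if not 0 < max_fpr < 1:
        raise ValueError("max_fpr must lie in (0, 1)")
    labels, count = label_regions(gt_mask.astype(bool))
    if count == 0:
        raise ValueError("gt_mask contains no defect regions")
    thresholds = np.linspace(anomaly_map.max(), anomaly_map.min(), steps)
    points = [pro_and_fpr(anomaly_map, labels, count, t) for t in thresholds]
    # Close the curve at (FPR 0, PRO 0) and (FPR 1, PRO 1): nothing / everything flagged.
    pros = np.array([0.0] + [p for p, _ in points] + [1.0])
    fprs = np.array([0.0] + [f for _, f in points] + [1.0])
    i = int(np.argmax(fprs > max_fpr))  # first curve point beyond the FPR limit
    frac = (max_fpr - fprs[i - 1]) / (fprs[i] - fprs[i - 1])
    x = np.append(fprs[:i], max_fpr)
    y = np.append(pros[:i], pros[i - 1] + frac * (pros[i] - pros[i - 1]))
    area = np.sum((x[1:] - x[:-1]) * (y[1:] + y[:-1]) / 2.0)  # trapezoidal rule
    return float(area / max_fpr)

# Usage: one small (2x2) and one large (6x6) defect; the map flags only the small one.
gt = np.zeros((16, 16), dtype=np.uint8)
gt[1:3, 1:3] = 1
gt[8:14, 8:14] = 1
labels, count = label_regions(gt.astype(bool))
small_only = np.zeros((16, 16))
small_only[1:3, 1:3] = 1.0
print(count, pro_and_fpr(small_only, labels, count, threshold=0.5))  # 2 (0.5, 0.0)
print(aupro(gt.astype(float), gt))  # 1.0 for a perfect map
```

Suppose an image has one 2x2 defect and one 6x6 defect. Flagging only the small one gives a PRO of 0.5, whereas plain pixel overlap would report 4/40 = 0.1. This per-region weighting is why AUPRO rewards catching small defects.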

Transforming Industrial Quality Control with AI

The advancements embodied by VTFusion represent a significant leap forward for industrial quality control. By precisely detecting and localizing anomalies with minimal prior examples, businesses can realize substantial benefits. This includes reduced operational costs by minimizing manual inspection labor, faster throughput by automating quality checks, and improved product reliability that enhances customer satisfaction and reduces warranty claims. Furthermore, the ability to rapidly adapt to new defect types or production line changes provides a crucial competitive edge in today's fast-paced manufacturing landscape.

For enterprises aiming to achieve superior product quality and operational efficiency, integrating cutting-edge AI for anomaly detection is no longer an option but a necessity. Solutions that leverage such robust multimodal AI can act as an invaluable asset, ensuring consistent excellence and fostering continuous improvement within production processes.

Conclusion: The Future of Industrial Inspection

The VTFusion framework marks a pivotal advancement in Few-Shot Anomaly Detection, successfully addressing the critical challenges of domain generalization and effective multimodal fusion in industrial contexts. By combining adaptive feature learning with an intelligent prediction fusion module, it sets a new standard for accuracy and precision in identifying manufacturing defects and other anomalies. This technology offers a clear path for industries to enhance their quality control, reduce costs, and accelerate their journey towards fully automated, intelligent operations. As global industries continue to push the boundaries of efficiency and quality, AI-powered solutions like those offered by ARSA Technology will be indispensable tools in building the future of industrial inspection.

Source: Jiang et al., IEEE Transactions on Cybernetics

Ready to enhance your industrial inspection and quality control processes with AI? Explore ARSA Technology's specialized solutions and contact ARSA today for a free consultation.