DART: Revolutionizing Edge AI with Input-Difficulty-Aware Adaptive Thresholds

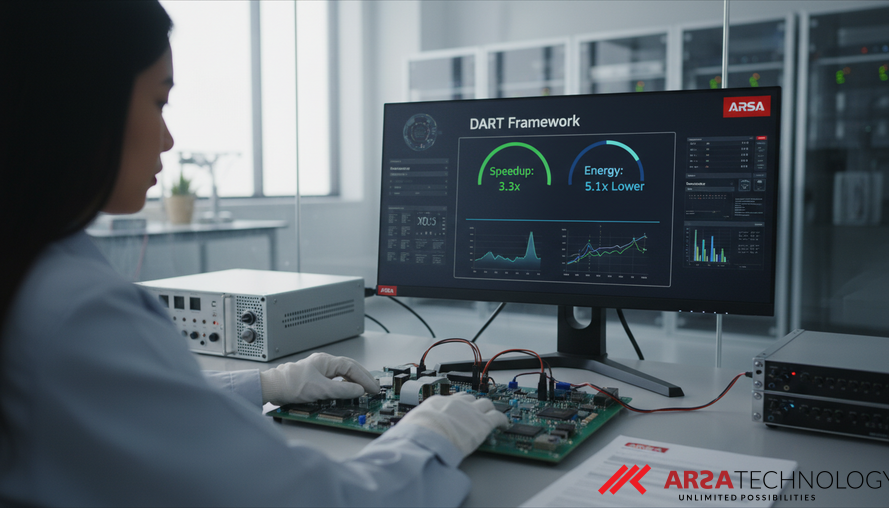

Explore DART, a framework for Early-Exit DNNs, which enhances AI efficiency on edge devices by adapting computation to input difficulty. Learn how it achieves up to 3.3x speedup and 5.1x lower energy consumption.

The Challenge of Edge AI: Balancing Performance and Resources

The promise of Artificial Intelligence (AI) at the edge, where intelligent systems run directly on devices like cameras, sensors, and drones, is immense. It enables real-time decision-making, enhanced privacy, and reduced reliance on cloud infrastructure. However, traditional Deep Neural Networks (DNNs) were not designed for the stringent resource constraints of edge devices. They often consume significant energy and computational power, processing every input with the same intensity, regardless of whether the task is simple or complex. This "one-size-fits-all" approach wastes resources and limits the adoption of advanced AI in resource-constrained environments.

To combat this, Dynamic Deep Neural Networks (D2NNs), and Early-Exit DNNs in particular, have emerged. These networks adapt their computational effort to the input: intermediate classifiers attached along the network let inference stop early once a high-confidence prediction is reached, which reduces latency and energy consumption. However, previous early-exit strategies have often fallen short, relying on static thresholds or on computationally heavy methods that undermine the very efficiency they aim to achieve. A new framework called DART (Input-Difficulty-AwaRe Adaptive Threshold) addresses these limitations with a more intelligent and efficient approach to adaptive AI inference. It is detailed in a forthcoming paper accepted at the 12th International Conference on Computing and Artificial Intelligence (ICCAI) 2026.
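To make the early-exit idea concrete, here is a minimal sketch of the static-threshold baseline that DART aims to improve on. It is not code from the paper: the function names are illustrative, and the network is reduced to an iterable that yields one logit vector per exit so that later stages are only computed when they are needed.

```python
import math
from typing import Iterable, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one logit vector."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def early_exit_predict(
    stage_logits: Iterable[Sequence[float]], threshold: float
) -> Tuple[int, int]:
    """Return (predicted_class, exit_index) using one static threshold.

    `stage_logits` yields the logits of each exit in order; the last item
    plays the role of the final classifier. If it is a generator, stages
    after the chosen exit are never evaluated.
    """
    label, index = -1, -1
    for index, logits in enumerate(stage_logits):
        probs = softmax(logits)
        confidence = max(probs)
        label = probs.index(confidence)
        if confidence >= threshold:
            break  # confident enough: skip the remaining stages
    if index < 0:
        raise ValueError("stage_logits must yield at least one exit")
    return label, index
```

The same threshold is applied to every input here, which is exactly the limitation of static policies: an easy input and a hard input must clear the same confidence bar.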

DART’s Foundational Innovations for Adaptive AI

DART distinguishes itself by introducing three key innovations designed to create genuinely adaptive and efficient early-exit DNNs. First, it incorporates a lightweight difficulty estimation module that quantifies the complexity of each input with minimal computational overhead. This is crucial because knowing an input's inherent difficulty allows the network to decide how deeply it needs to process the data. Second, DART employs a joint exit policy optimization algorithm based on dynamic programming. Unlike previous methods that optimize each exit independently, DART considers all potential exit points simultaneously, leading to more globally coherent and optimal routing decisions. Finally, an adaptive coefficient management system ensures continuous refinement and online learning, allowing the network to adjust its behavior during deployment for sustained efficiency.
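As a rough illustration of how a difficulty score and an adaptive coefficient could interact, the sketch below shifts an exit's confidence threshold with the input's difficulty and nudges the coefficient from online feedback. The linear rule, the clamping bounds, the learning rate, and the update logic are all assumptions made for this example; the article does not specify the formulas DART uses.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveThreshold:
    """Hypothetical per-exit threshold that depends on input difficulty."""

    base: float        # threshold for an input of medium difficulty (0.5)
    coeff: float       # how strongly difficulty shifts the threshold
    lr: float = 0.05   # step size for the online coefficient update

    def value(self, difficulty: float) -> float:
        """Harder inputs (difficulty near 1) must clear a higher bar."""
        raw = self.base + self.coeff * (difficulty - 0.5)
        return min(0.99, max(0.5, raw))  # assumed clamping range

    def update(self, difficulty: float, exited_early: bool, was_correct: bool) -> None:
        """Assumed feedback rule: a wrong early exit makes the threshold
        more sensitive to difficulty, a correct one relaxes it slightly."""
        if not exited_early:
            return
        direction = -1.0 if was_correct else 1.0
        self.coeff = max(0.0, self.coeff + self.lr * direction * difficulty)
```

With `AdaptiveThreshold(base=0.8, coeff=0.2)`, an easy input (difficulty 0.0) needs a confidence of about 0.7 to exit, while a hard input (difficulty 1.0) needs about 0.9.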

This unified framework directly tackles persistent challenges in dynamic neural networks, making them more robust and practical for real-world applications. For enterprises looking to deploy AI video analytics or other sophisticated AI models on edge devices, DART's methodology translates directly into more reliable and cost-effective operations, offering a significant leap forward in intelligent system design.

Quantifying Input Complexity with Multi-Modal Metrics

The cornerstone of DART's adaptive behavior is its innovative approach to difficulty-aware input processing. This module combines multiple metrics to provide a nuanced understanding of an input's complexity, ensuring that the network processes data only as much as necessary.

- Edge Density Computation: This metric assesses the structural complexity of an input image. Imagine converting an image to grayscale and then identifying all the sharp lines or "edges" within it. Edge density measures how many of these strong lines are present, indicating a busy or detailed scene. A high edge density suggests a more complex image requiring deeper analysis.

- Pixel Variance Analysis: Capturing the texture complexity and local variations, pixel variance looks at how much the colors or brightness change from one pixel to its neighbors. A low variance might indicate a smooth, uniform area, while high variance points to rich textures and intricate patterns that demand more attention from the neural network.

- Gradient Complexity Assessment: This goes a step further than simple edge detection by utilizing Laplacian operators to pinpoint fine-grained patterns and subtle changes in the image. It helps detect details that might be missed by coarser analysis, providing a deeper layer of complexity understanding.

These three metrics are then combined using a weighted fusion technique to produce a single "difficulty score" between 0 and 1. This score acts as a guide, signaling to the network whether an input is simple enough for an early exit or if it requires full computational depth. This precise, yet lightweight, assessment of input difficulty is what allows DART-enabled networks to achieve their impressive efficiency gains.
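The three metrics and their fusion can be sketched in a few lines of dependency-free Python. This is an illustration under stated assumptions, not the paper's implementation: the Sobel edge detector, the edge threshold, the normalization constants, and the fusion weights are all placeholders chosen for the example. Images are grayscale grids with values in [0, 1] and at least 3x3 pixels.

```python
import math
from typing import Sequence, Tuple

Image = Sequence[Sequence[float]]  # grayscale, values in [0, 1], at least 3x3


def _interior(img: Image):
    """Coordinates of pixels that have all eight neighbors."""
    h, w = len(img), len(img[0])
    return [(y, x) for y in range(1, h - 1) for x in range(1, w - 1)]


def edge_density(img: Image, thresh: float = 0.5) -> float:
    """Fraction of interior pixels whose Sobel gradient exceeds `thresh`."""
    pts = _interior(img)
    strong = 0
    for y, x in pts:
        gx = (img[y - 1][x + 1] + 2 * img[y][x + 1] + img[y + 1][x + 1]) - (
            img[y - 1][x - 1] + 2 * img[y][x - 1] + img[y + 1][x - 1])
        gy = (img[y + 1][x - 1] + 2 * img[y + 1][x] + img[y + 1][x + 1]) - (
            img[y - 1][x - 1] + 2 * img[y - 1][x] + img[y - 1][x + 1])
        if math.hypot(gx, gy) > thresh:
            strong += 1
    return strong / len(pts)


def pixel_variance(img: Image) -> float:
    """Intensity variance, scaled by 0.25 (the maximum for values in [0, 1])."""
    vals = [v for row in img for v in row]
    mean = sum(vals) / len(vals)
    var = sum((v - mean) ** 2 for v in vals) / len(vals)
    return min(1.0, var / 0.25)


def gradient_complexity(img: Image) -> float:
    """Mean absolute 4-neighbor Laplacian, scaled by its maximum of 4."""
    pts = _interior(img)
    total = sum(
        abs(img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1]
            - 4 * img[y][x])
        for y, x in pts)
    return min(1.0, total / (4.0 * len(pts)))


def difficulty_score(
    img: Image, weights: Tuple[float, float, float] = (0.4, 0.3, 0.3)
) -> float:
    """Weighted fusion of the three metrics into one score in [0, 1].
    The weights are placeholders, not values from the paper."""
    metrics = (edge_density(img), pixel_variance(img), gradient_complexity(img))
    score = sum(w * m for w, m in zip(weights, metrics)) / sum(weights)
    return min(1.0, max(0.0, score))
```

A uniform gray image scores 0 on all three metrics, while a checkerboard scores high on variance and Laplacian response. The checkerboard also shows why several metrics are fused: its symmetric pattern cancels the Sobel response, so edge density alone would rate it as easy.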

Optimizing Exit Strategies and Measuring True Efficiency

Beyond merely identifying input difficulty, DART’s joint exit policy optimization is crucial. Tuning each exit point in isolation can lead to suboptimal overall performance, because the threshold chosen at one exit changes which inputs reach the exits after it. DART instead uses dynamic programming to optimize all exit thresholds simultaneously. This ensures that the network makes globally coherent decisions, balancing accuracy against computational cost across the entire network. This holistic approach is vital for achieving the substantial efficiency gains seen in practical deployments.
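The following sketch shows one way a dynamic program can choose thresholds jointly, using backward induction from the final classifier. It is a simplified stand-in rather than the paper's algorithm. It assumes a hypothetical calibration table per exit that maps each candidate threshold to an exit rate and the accuracy of the exiting samples, treats those numbers as independent of earlier thresholds, and scores a policy as accuracy minus `lam` times normalized compute cost.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical calibration data for one exit:
# candidate threshold -> (fraction of arriving inputs that exit, their accuracy)
ExitTable = Dict[float, Tuple[float, float]]


def optimize_thresholds(
    tables: Sequence[ExitTable],
    exit_costs: Sequence[float],
    final_acc: float,
    final_cost: float,
    lam: float,
) -> Tuple[List[float], float]:
    """Pick one threshold per early exit by backward induction.

    `value` holds the best expected score (accuracy - lam * cost) for an
    input that continues past the current exit. Working from the last
    early exit to the first, each threshold is chosen with full knowledge
    of what the downstream exits will achieve.
    """
    value = final_acc - lam * final_cost  # score of running the full network
    chosen: List[float] = []
    for table, cost in zip(reversed(tables), reversed(exit_costs)):
        best_t, best_v = None, float("-inf")
        for t, (rate, acc) in sorted(table.items()):
            v = rate * (acc - lam * cost) + (1.0 - rate) * value
            if v > best_v:
                best_t, best_v = t, v
        chosen.append(best_t)
        value = best_v
    chosen.reverse()
    return chosen, value
```

Raising `lam` makes compute more expensive relative to accuracy, which pushes the selected thresholds down so that more inputs leave at the early exits. A real system would also have to model how earlier thresholds change the inputs that arrive at later exits, which this sketch deliberately ignores.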

To truly measure the effectiveness of such dynamic networks, traditional metrics like accuracy and speedup are insufficient on their own. The authors of the DART paper therefore introduce the Difficulty-Aware Efficiency Score (DAES). This multi-objective metric combines accuracy, computational speedup, and power efficiency, while explicitly factoring in the input's difficulty. DAES is computed by dividing the product of accuracy, speedup, and power efficiency by (1 + difficulty score), giving a single figure for evaluating models across varying input complexities. A higher DAES indicates a more robust and efficient model in heterogeneous real-world conditions, which makes it a useful tool for deployments such as ARSA Technology's AI BOX - Traffic Monitor that need consistent performance across diverse traffic conditions.
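The formula described above is small enough to write out directly. In this sketch the argument names are illustrative, and it is an assumption that speedup and power efficiency are expressed as ratios relative to a static baseline and that all four inputs are scalar aggregates.

```python
def daes(accuracy: float, speedup: float, power_efficiency: float,
         difficulty: float) -> float:
    """Difficulty-Aware Efficiency Score as described in the text:
    (accuracy * speedup * power_efficiency) / (1 + difficulty)."""
    if not 0.0 <= difficulty <= 1.0:
        raise ValueError("difficulty score must lie in [0, 1]")
    return accuracy * speedup * power_efficiency / (1.0 + difficulty)
```

Because the difficulty score lies between 0 and 1, the denominator ranges from 1 to 2, so difficulty can at most halve the score for otherwise identical accuracy, speedup, and power figures.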

Practical Applications and Measurable Impact

The experimental results for DART are compelling, demonstrating its potential to transform edge AI deployments. Across diverse DNN benchmarks such as AlexNet, ResNet-18, and VGG-16, DART achieved up to a 3.3 times speedup, 5.1 times lower energy consumption, and up to 42% lower average power compared to static networks, all while maintaining competitive accuracy. For example, in smart city applications where countless cameras continuously monitor traffic or public safety, such efficiency gains mean significantly reduced operational costs and extended device lifespans for intelligent systems.

While DART shows immense promise for conventional Convolutional Neural Networks (CNNs), its application to Vision Transformers (like LeViT) yielded power and execution-time gains (5.0x and 3.6x respectively) but also an accuracy loss of up to 17%. This highlights an important area for future research: the need for specialized early-exit mechanisms tailored to the unique architectures of transformers. Despite this, for many current enterprise applications, DART offers a ready-to-deploy solution that can drastically improve the efficiency of existing AI infrastructure. Enterprises across various industries are increasingly seeking efficient ways to manage their AI inference workloads without compromising performance or privacy, making DART's approach highly relevant.

Conclusion: The Future of Efficient Edge AI

DART represents a significant step forward in making AI more practical and sustainable for edge deployments. By intelligently adapting computational effort to the inherent difficulty of each input, DART not only delivers impressive speedup and energy savings but also introduces a robust way to evaluate these complex systems through the DAES metric. For organizations striving for operational excellence and considering AI solutions like those offered by ARSA Technology, frameworks like DART pave the way for more efficient, reliable, and cost-effective intelligent systems.

Source: Patne, P., Taheri, M., Herglotz, C., Jenihhin, M., Krstic, M., & Hübner, M. (2026). DART: Input-Difficulty-AwaRe Adaptive Threshold for Early-Exit DNNs. Accepted at the 12th International Conference on Computing and Artificial Intelligence (ICCAI) 2026. Retrieved from https://arxiv.org/abs/2603.12269
